Full reorthogonalization of each new vector against the already computed ones is computationally expensive but solves the problem.
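
As a rough illustration of what full reorthogonalization involves, the sketch below runs the Lanczos process on a small dense symmetric matrix and orthogonalizes each new vector against every previously computed Lanczos vector. It is a minimal pure-Python sketch under stated assumptions, not a production implementation: the names `lanczos_full_reorth`, `dot`, and `matvec`, the breakdown tolerance `1e-12`, and the choice of two orthogonalization passes are all illustrative choices that do not come from the text.

```python
import math


def dot(u, v):
    """Euclidean inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def matvec(A, x):
    """Dense matrix-vector product; A is a list of rows."""
    return [dot(row, x) for row in A]


def lanczos_full_reorth(A, v0, m):
    """Run up to m Lanczos steps on a symmetric matrix A, starting from v0.

    Returns (Q, alpha, beta): Q holds the Lanczos vectors, alpha is the
    diagonal of the tridiagonal matrix T, and beta is its off-diagonal.
    """
    norm0 = math.sqrt(dot(v0, v0))
    Q = [[x / norm0 for x in v0]]
    alpha, beta = [], []
    for j in range(m):
        w = matvec(A, Q[j])
        alpha.append(dot(w, Q[j]))
        # Full reorthogonalization: remove the component of w along every
        # Lanczos vector computed so far. Two passes are an assumed,
        # commonly used safeguard against rounding error.
        for _ in range(2):
            for q in Q:
                c = dot(w, q)
                w = [wi - c * qi for wi, qi in zip(w, q)]
        b = math.sqrt(dot(w, w))
        # Stop after m steps, or early if the Krylov space is exhausted.
        if j == m - 1 or b < 1e-12:
            break
        beta.append(b)
        Q.append([wi / b for wi in w])
    return Q, alpha, beta
```

The inner loop over all of `Q` is where the extra cost comes from: step `j` performs on the order of `j` additional inner products and vector updates, compared with the fixed three-term recurrence of the plain Lanczos iteration.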

The Lanczos matrix is symmetric tridiagonal, but the Lanczos vectors tend to lose orthogonality during the Lanczos iteration, resulting in inaccurate eigenvalues. This section discusses the implicitly shifted QR algorithm for computing a few eigenvalues of a large sparse symmetric matrix using the Lanczos decomposition.

Eigenvalue Computation Using the Lanczos Process

This is the most commonly used method for computing a few eigenvalues of a large sparse matrix. To approximate nev eigenvalues and corresponding eigenvectors, use deflation and a filter polynomial such as

$$p_r(A)\, v_k^{(m)} = \left(A - \lambda_{nev+1}^{(m)} I\right)\left(A - \lambda_{nev+2}^{(m)} I\right) \cdots \left(A - \lambda_{m}^{(m)} I\right) v_k^{(m)}$$

to provide a better restart vector. Evaluate this polynomial using the implicitly shifted QR algorithm, which requires only $O(m^2)$ flops.

Compute a few eigenvalues of $H_m$, sort them in descending order, and estimate the error of each using Equation 22.3. Accept the eigenvalues and optionally the corresponding eigenvectors that satisfy an error tolerance. Accept eigenvalues satisfying the error tolerance and otherwise restart Arnoldi with the current Ritz vector or some improvement of it. Restart until computing eigenvalue $\lambda_1$, deflate the matrix and search for $\lambda_2$, and continue until computing the desired eigenvalues.

(mathematics) A map which maps a subspace (smaller structure) to the whole space (larger structure).

However, the implementation is not simple.

The orthogonal basis is rotated to align with the coordinate system, which is left unchanged.

Subspaces are smaller vector spaces within a vector space $\mathbb{R}^n$.

The two types of planes are parallel planes and intersecting planes.

Factor rotations make the expression of a particular subspace simpler.

Planes can appear as subspaces of some multidimensional space, as in the case of one of the walls of the room, infinitely expanded, or they can enjoy an independent existence on their own, as in the setting of Euclidean geometry.
Generally, PCA seeks to represent n correlated random variables by a reduced set of uncorrelated variables, which are obtained by transformation of the original set onto an appropriate subspace.

SIR tries to find this -dimensional subspace of which under the model (18.10) carries the essential information of the regression between and. SIR also focuses on small, so that nonparametric methods can be applied for the estimation of.

It can reveal the projection of an observation on the subspace with the score points. It can also find the ratio of observations and variables in the subspace of the first two components.

He published around 16 papers including Linear operators with closed range (1968), Lifting convergent sequences with networks (1971), Sequentially barrelled spaces (1973), Inductively reflexive spaces with extended Schauder decompositions (1978), and Subspaces of barrelled spaces (1980).

If we now restrict ourselves to the subspaces of $\mathbb$ and is itself a real number.

The continuous image of a connected space is connected.

A metric space is Hausdorff, also normal and paracompact.

"Let $A$ and $B$ be subspaces of a space $S$ and suppose $\phi$ is an ambient homeomorphism taking $A$ to $B$." The point of this sentence is that $A$ and $B$ are not merely homeomorphic, but they are homeomorphic via the automorphism $\phi:S\to S$ of the space $S$.

A compact subspace of a Hausdorff space is closed.

Every sequence of points in a compact metric space has a convergent subsequence.
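
Returning to the PCA remark at the start of this passage, the idea of projecting correlated variables onto a lower-dimensional subspace can be sketched in a few lines. The example below is only an illustration under stated assumptions: it finds the first principal direction by power iteration on the sample covariance matrix, which is one of several possible methods, and the names `first_principal_component` and `scores` are hypothetical helpers, not taken from any library or from the text.

```python
import math


def first_principal_component(data, iters=200):
    """Return (mean, direction) for data given as a list of rows.

    The direction is the dominant eigenvector of the sample covariance
    matrix, approximated here by power iteration (an illustrative choice).
    """
    n, d = len(data), len(data[0])
    mean = [sum(row[j] for row in data) / n for j in range(d)]
    centered = [[row[j] - mean[j] for j in range(d)] for row in data]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Assumed starting vector; it must not be orthogonal to the dominant
    # eigenvector, otherwise power iteration cannot find it.
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break
        v = [x / norm for x in w]
    return mean, v


def scores(data, mean, direction):
    """Coordinates of each centered observation along the given direction."""
    d = len(mean)
    return [sum((row[j] - mean[j]) * direction[j] for j in range(d))
            for row in data]
```

The values returned by `scores` are the score points mentioned above: each one is the coordinate of an observation after projection onto the one-dimensional subspace spanned by `direction`.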

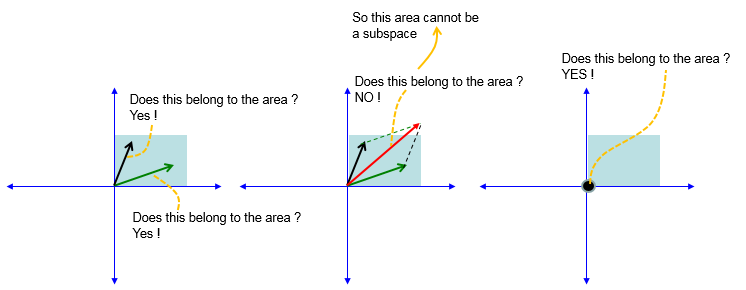

Proof of the Cauchy-Schwarz Inequality. Vector Triangle Inequality. Defining a plane in $\mathbb{R}^3$ with a point and normal vector.

A line segment which is defined by the tangent (of a point to a curve).

Subspace: A vector space which is the subset of another specified vector space. This term can also be used for a subset of a topological space.

Let be the subspace spanned by vectors, which are Q-conjugate. Let be any initial point in, and let be generated by these conjugate directions with optimal line search:

A subspace is a subset of a vector space that is also itself a vector space.

A vector space W is a subspace of the vector space V over the field K if and only if.

A vector subspace or linear subspace is a vector space within a higher-dimensional vector space.

Now that we understand what it means for a set to be closed under addition and scalar multiplication, we are ready for the main definition. Suppose that is a nonempty subset of that is closed under addition and closed under scalar multiplication.

A subspace of $\mathbb{R}^n$ is a subset $V$ of $\mathbb{R}^n$ satisfying:

- Closure under addition: If $u$ and $v$ are in $V$, then $u + v$ is also in $V$.
- Closure under scalar multiplication: If $v$ is in $V$ and $c$ is in $\mathbb{R}$, then $cv$ is also in $V$.

The subset must also be nonempty, or equivalently contain the zero vector, since the empty set satisfies both closure conditions vacuously.

David Cherney, Tom Denton, & Andrew Waldron. Professor (Mathematics) at University of California, Davis.
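
The two closure conditions in the definition above can be spot-checked numerically. The sketch below is an illustration only: it tests closure on a handful of sample vectors, so a pass is evidence rather than a proof, while a failure is a genuine counterexample. The sets used (the plane $x + y + z = 0$ and the shifted plane $x + y + z = 1$ in $\mathbb{R}^3$) and the helper names `in_plane` and `closed_on_samples` are assumptions chosen for the example.

```python
def in_plane(v, offset=0.0, tol=1e-12):
    """Membership test for the set {(x, y, z) : x + y + z = offset}."""
    return abs(sum(v) - offset) < tol


def add(u, v):
    """Componentwise sum of two vectors."""
    return [a + b for a, b in zip(u, v)]


def scale(c, v):
    """Scalar multiple of a vector."""
    return [c * a for a in v]


def closed_on_samples(member, samples, scalars):
    """Check closure under addition and scalar multiplication on samples.

    `member` is a predicate describing the set; every vector in `samples`
    is assumed to belong to it.
    """
    for u in samples:
        for v in samples:
            if not member(add(u, v)):
                return False
        for c in scalars:
            if not member(scale(c, u)):
                return False
    return True


# The plane through the origin passes both closure checks on these samples.
through_origin = closed_on_samples(
    lambda v: in_plane(v, 0.0),
    [[1.0, -1.0, 0.0], [0.0, 2.0, -2.0], [3.0, 0.0, -3.0]],
    [0.0, -1.0, 2.5],
)

# The shifted plane fails: (1, 0, 0) + (0, 1, 0) sums to 2, not 1.
shifted = closed_on_samples(
    lambda v: in_plane(v, 1.0),
    [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
    [0.0, -1.0, 2.5],
)
```

Here `through_origin` evaluates to `True` and `shifted` to `False`, matching the fact that a plane is a subspace of $\mathbb{R}^3$ only when it passes through the origin.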